Automation & AI, Business Intelligence, Data Strategy

Data Quality: Boosting Data Accuracy by 60%

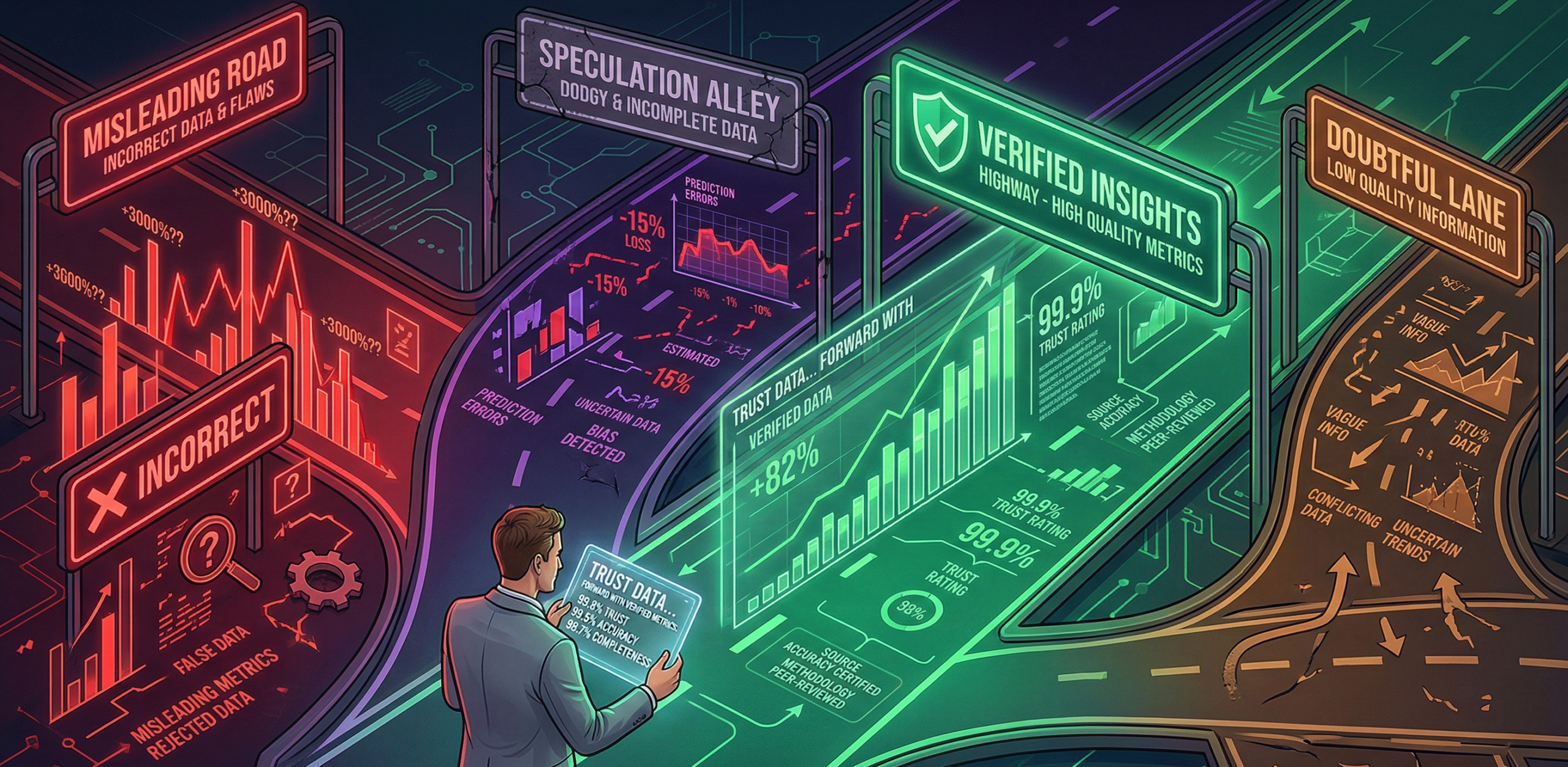

Information is only as useful as the data that goes into it. At this firm, human error and inconsistent training were turning the data into a liability. This wasn’t just a problem for the IT team and data engineers. It was also a major risk for regulatory submissions and internal audits. The team was spending more time cleaning the data than actually using it.

In this instance, I didn’t want to just fix mistakes after they happened. My aim was to prevent them. I designed a “Quality Gatekeeper” system that categorises data issues by their criticality. My goal was to create a feedback loop: the system identifies the error, protects the Data Warehouse from ‘dirty’ data and, lastly, helps the team learn from the mistake in real time.

I engineered a custom Data Quality Store and a reference mapping structure. The system automatically checks every entry. If it’s a minor slip, the system cleans the data before it is loaded. If it’s a critical error, the system pulls the entry aside, so the rest of the process keeps running and only correct entries reach the main warehouse. When a critical error is caught, the individual who entered it gets an immediate alert with a simple explanation of how to fix it. I also built a high-level MI dashboard for management, showing exactly where the most common errors were originating so we could target training where it was needed most.
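To make the flow concrete, here is a minimal Python sketch of how a gate like this could triage entries. It is an illustration under assumptions, not the production system: the field names (`client_id`, `country`, `entered_by`), the `COUNTRY_MAP` reference mapping and the two example rules are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass, field
from enum import Enum


class Severity(Enum):
    MINOR = "minor"        # safe to auto-correct before loading
    CRITICAL = "critical"  # must be held back from the warehouse


# Hypothetical reference mapping: known variants -> canonical value.
COUNTRY_MAP = {"uk": "GB", "u.k.": "GB", "united kingdom": "GB", "gb": "GB"}


@dataclass
class GateResult:
    warehouse: list = field(default_factory=list)     # clean rows, ready to load
    quarantine: list = field(default_factory=list)    # (row, reasons) held back
    alerts: list = field(default_factory=list)        # (user, reasons) to notify
    issues: Counter = field(default_factory=Counter)  # (user, severity) counts for MI


def check_entry(row: dict) -> tuple[dict, list[tuple[Severity, str]]]:
    """Return a cleaned copy of the row plus any issues found (example rules only)."""
    cleaned = dict(row)
    issues = []

    # Country: standardise known variants, reject anything not in the mapping.
    raw_country = str(row.get("country") or "").strip()
    canonical = COUNTRY_MAP.get(raw_country.lower())
    if canonical is None:
        issues.append((Severity.CRITICAL,
                       f"Unknown country '{raw_country}'. Pick a value from the reference list."))
    elif canonical != raw_country:
        cleaned["country"] = canonical
        issues.append((Severity.MINOR,
                       f"Country '{raw_country}' standardised to '{canonical}'."))

    # Client ID: a missing identifier cannot be repaired automatically.
    if not str(row.get("client_id") or "").strip():
        issues.append((Severity.CRITICAL,
                       "Client ID is missing. Enter the ID and resubmit."))

    return cleaned, issues


def run_gate(rows: list[dict]) -> GateResult:
    """Clean minor slips, quarantine critical errors and record who to alert."""
    result = GateResult()
    for row in rows:
        cleaned, issues = check_entry(row)
        user = row.get("entered_by", "unknown")
        for severity, _ in issues:
            result.issues[(user, severity.value)] += 1
        critical = [msg for sev, msg in issues if sev is Severity.CRITICAL]
        if critical:
            result.quarantine.append((row, critical))
            result.alerts.append((user, critical))
        else:
            result.warehouse.append(cleaned)
    return result
```

In this sketch, the `issues` counter stands in for the data behind the MI dashboard: grouping counts by who entered the row and how severe the issue was is one simple way to see where training might be targeted.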

– 60% Cleaner Data: We saw a massive jump in overall data quality almost immediately.

– 50% Less Audit Effort: Because the data was clean at the source, the time spent on pre-regulatory cleansing and audit sampling was cut in half.

– From Errors to Insights: The team moved from dealing with data errors to producing informed insights. This also drastically reduced the need for repetitive training.

- Category: Automation & AI, Business Intelligence, Data Strategy

- Date: Mar. 25, 2026