Why the Jack the Ripper case is a masterclass in poor data strategy.

Everyone, through books, movies, TV series, podcasts, games or any other form imaginable, has come across the name Jack the Ripper. A fair few, myself included, have a deeper interest in the phenomenon of Ripperology.

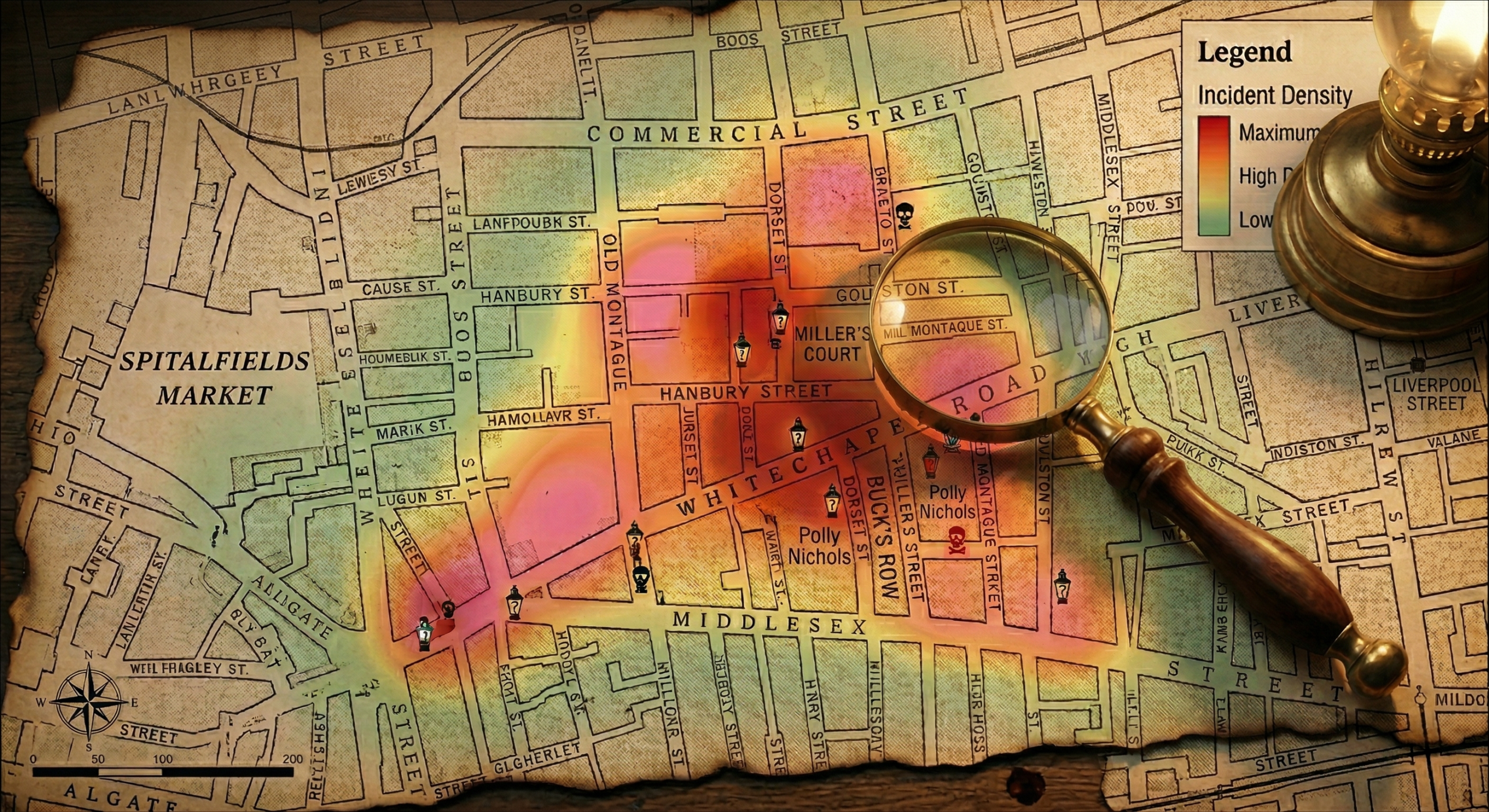

Let me take you back to an era I personally find captivating. The year is 1888. A thick, yellow “pea-souper” fog descends on the East End of London. In the shadows of Whitechapel, a figure murders Mary Jane Kelly, the last of the Canonical Five, and then vanishes, leaving behind a trail of violence and a mystery that, more than 135 years later, has never cooled.

(A post with more details and the highlights of the case will be coming soon…)

I spend most of my days looking for patterns, building data architecture and dealing with messy inputs. As a student of dark history, I am drawn to the Whitechapel Murders, not simply because of the lure of mystery, nor because Jack the Ripper is the ultimate killer, but because the whole case book is the ultimate failed data project.

The Null Value

We are obsessed with the Ripper because he represents a total lack of resolution. In data terms, he is a Null Value in the primary key of 19th-century crime. The media of the time behaved like a thoroughly biased algorithm. The infamous “Dear Boss” letter, the source of the name “Jack the Ripper”, together with the illustrations and the persona the papers built around the killer, introduced a bias that, more likely than not, skewed the information beyond breaking point.

Because the data is so incomplete, the public (the end user) can project any identity onto the killer: royalty, butcher, immigrant and so on. The result is the ultimate unsupervised learning problem, one with no proper foundation beneath it.

The audit: what was missing from a strategic view

If I were auditing the Metropolitan Police and the City of London Police in 1888, my report would highlight three critical systemic failures.

Poor Interoperability – Whitechapel sat on a jurisdictional border. Catherine Eddowes was killed in Mitre Square, inside the City of London’s jurisdiction, while the other canonical murders fell to the Met. Evidence found by the City Police often didn’t reach the Met in a usable format. In modern terms, they were running disparate, disconnected legacy systems with no shared API: a simple yet common silo problem.

Data Integrity – Within hours of the murders of Elizabeth Stride and Catherine Eddowes, a message was found chalked on a wall in Goulston Street: “The Juwes are the men that will not be blamed for nothing”. With racial tensions on the rise, the then Metropolitan Police Commissioner, Sir Charles Warren, ordered it to be wiped clean before it could be photographed. This is a hard delete of a primary record before its metadata could be captured. They prioritised reputational risk over data retention, effectively blinding the investigation.

Sifting through noisy data – The police were flooded with hundreds of letters and so-called eyewitness accounts. Without any verification layer (forensic science was sadly lacking at the time), the noise-to-signal ratio was overwhelmingly high, and there was no reliable way to separate clean data from noise. It also meant they could not tell the killer’s genuine signature apart from the work of copycats.
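In data-platform terms, the alternative to Warren’s hard delete is a capture-then-redact pattern: fingerprint the record and log who removed it and why, then hide it from public view while keeping the original in a restricted archive. The Python sketch below is purely illustrative; `EvidenceStore`, `redact` and every other name in it are hypothetical, not taken from any real evidence-management system.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class EvidenceRecord:
    record_id: str
    content: str
    redacted: bool = False


@dataclass(frozen=True)
class AuditEntry:
    record_id: str
    sha256: str       # fingerprint of the content at the moment of redaction
    captured_at: str  # UTC timestamp (ISO 8601)
    actor: str
    reason: str


class EvidenceStore:
    """Hypothetical store: redaction hides a record but never destroys it."""

    def __init__(self) -> None:
        self._records: dict[str, EvidenceRecord] = {}
        self.audit_log: list[AuditEntry] = []  # only ever appended to

    def add(self, record_id: str, content: str) -> None:
        self._records[record_id] = EvidenceRecord(record_id, content)

    def redact(self, record_id: str, actor: str, reason: str) -> AuditEntry:
        record = self._records[record_id]
        # 1. Capture metadata and a content hash BEFORE anything is hidden
        entry = AuditEntry(
            record_id=record_id,
            sha256=hashlib.sha256(record.content.encode("utf-8")).hexdigest(),
            captured_at=datetime.now(timezone.utc).isoformat(),
            actor=actor,
            reason=reason,
        )
        self.audit_log.append(entry)
        # 2. Soft delete: flag the record instead of removing it
        record.redacted = True
        return entry

    def public_view(self, record_id: str) -> str:
        record = self._records[record_id]
        return "[REDACTED]" if record.redacted else record.content

    def restricted_view(self, record_id: str) -> str:
        # Investigators with clearance still see the original content
        return self._records[record_id].content


store = EvidenceStore()
store.add("goulston-st", "The Juwes are the men that will not be blamed for nothing")
store.redact("goulston-st", actor="commissioner", reason="risk of public disorder")
print(store.public_view("goulston-st"))      # [REDACTED]
print(store.restricted_view("goulston-st"))  # original text is preserved
```

The reputational concern is still addressed (the public sees nothing), but the investigation keeps the primary record plus a tamper-evident trail of when and why it was hidden.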

Replicating the Instance Today: The Data-Driven Outcome

What happens if a similar pattern emerges in 2026? If we neglected the same areas, data sharing and integrity, the outcome would very likely be the same: a cold case. The only difference is that, with a modern data architecture, the storyline becomes somewhat more complex:

The Interoperability concern – In 1888, the gap lay between the City and Metropolitan police. Today it lies between public and private data. A killer who deliberately targets specific blind spots would be much harder to catch. Such blind spots include encrypted burner apps, vehicles rented under stolen or false identities, and cash-only transactions.

While the police have CCTV, they cannot access private encrypted messaging or real-time location data held by private companies without a warrant or equivalent legal request for each data source. If the killer moves faster than the legal system, parts of the digital footprint may slip through that gap and be lost.

Data Integrity – The killer leaves a red herring, or “noise”, on a digital platform to divert attention, or implicates a sensitive political group, agenda or corporation. This could lead to the platform being misused and to heated, unproductive public debate, which in turn may prompt a hard deletion of the evidence to calm the frenzy. By deleting the data point before it can be properly analysed, the investigation may be neutralised and conspiracy theories take hold, something more dangerous than the killer himself.

AI as a form of media and sensationalism – As soon as the first murder goes viral, trolls and clout-chasers would flood social media and digital platforms with theories, generative AI videos and fake photos. Law enforcement’s inference engines would start to be overwhelmed, not because they lack capability, but because investigators would spend more time debunking AI-generated fabrications than following actual leads.

A modern investigation would look something like this:

Geographic Profiling – Instead of simply looking for “a man in a hat”, we apply Rossmo’s formula to GPS pings, IoT sensors, CCTV timestamps and connectivity signals to identify the main anchor point: where is the subject most likely to live, given the spatial distribution of the crime scenes?
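For readers curious about the maths, Rossmo’s formula scores each cell of a map grid by summing a contribution from every crime site, using Manhattan distance and a buffer zone of radius B around the offender’s home in which offending is less likely. Below is a minimal Python sketch, assuming a small integer grid, made-up coordinates and the exponents f = g = 1.2 commonly cited from Rossmo’s original work; the normalising constant k is dropped because only the ranking of cells matters. `Point`, `rossmo_score` and `hot_spot` are names invented for this illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Point:
    x: float
    y: float


def rossmo_score(cell: Point, crimes: list[Point], buffer_radius: float,
                 f: float = 1.2, g: float = 1.2) -> float:
    """Unnormalised Rossmo score for one grid cell (constant k omitted).

    For each crime at Manhattan distance d from the cell, add:
      1 / d**f                   if d > B   (distance decay)
      B**(g - f) / (2B - d)**g   if d <= B  (buffer zone)
    """
    if buffer_radius <= 0:
        raise ValueError("buffer_radius must be positive")
    total = 0.0
    for crime in crimes:
        # Manhattan distance, approximating travel along a street grid
        d = abs(cell.x - crime.x) + abs(cell.y - crime.y)
        if d > buffer_radius:
            # Outside the buffer: likelihood decays with distance
            total += 1.0 / d ** f
        else:
            # Inside the buffer: offenders tend to avoid striking too close to home
            total += buffer_radius ** (g - f) / (2 * buffer_radius - d) ** g
    return total


def hot_spot(crimes: list[Point], width: int, height: int,
             buffer_radius: float) -> tuple[Point, float]:
    """Scan a width x height grid and return the highest-scoring cell."""
    best, best_score = None, float("-inf")
    for x in range(width):
        for y in range(height):
            cell = Point(x, y)
            score = rossmo_score(cell, crimes, buffer_radius)
            if score > best_score:
                best, best_score = cell, score
    return best, best_score


# Hypothetical crime-site coordinates on a 20 x 20 grid
crimes = [Point(5, 6), Point(8, 4), Point(9, 9), Point(6, 11), Point(11, 7)]
anchor, score = hot_spot(crimes, width=20, height=20, buffer_radius=2)
print(f"Most probable anchor point: ({anchor.x}, {anchor.y}), score {score:.3f}")
```

Note the buffer-zone branch: a cell sitting exactly on a crime scene scores lower than one at distance B from it, which encodes the idea that offenders rarely strike on their own doorstep.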

Automated Cross-Referencing – A centralised data lake would ingest reports from every borough in real time. Using NLP (Natural Language Processing), a suspicious incident in East London could be instantly correlated with a broken window, an emergency call or even an automated intruder alert in North London.
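Real systems would use trained language models, but the core idea of cross-referencing can be sketched with something much simpler. The Python below swaps in plain keyword overlap (Jaccard similarity) as a stand-in for NLP, and pairs reports from different boroughs that fall within a time window. The `Report` fields, the stop-word list, the window and the threshold are all hypothetical choices for illustration.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timedelta
from itertools import combinations


@dataclass(frozen=True)
class Report:
    report_id: str
    borough: str
    timestamp: datetime
    text: str


# Tiny illustrative stop-word list; a real pipeline would use a proper NLP library
STOPWORDS = {"a", "an", "the", "at", "in", "on", "of", "and", "near", "was", "to"}


def tokens(text: str) -> set[str]:
    """Lower-case word tokens with stop words removed."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of words the two sets have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def correlate(reports: list[Report], window: timedelta,
              threshold: float) -> list[tuple[str, str, float]]:
    """Pair reports from different boroughs that are close in time and wording."""
    matches = []
    for r1, r2 in combinations(reports, 2):
        if r1.borough == r2.borough:
            continue  # same-borough links are assumed to be handled locally
        if abs(r1.timestamp - r2.timestamp) > window:
            continue
        score = jaccard(tokens(r1.text), tokens(r2.text))
        if score >= threshold:
            matches.append((r1.report_id, r2.report_id, round(score, 2)))
    return matches


reports = [
    Report("A", "Tower Hamlets", datetime(2026, 1, 10, 23, 0),
           "Man in dark coat seen running with a knife"),
    Report("B", "Islington", datetime(2026, 1, 10, 23, 40),
           "Intruder alert: man in dark coat running from garden"),
    Report("C", "Camden", datetime(2026, 1, 12, 10, 0),
           "Broken window reported at shop"),
]
print(correlate(reports, window=timedelta(hours=2), threshold=0.3))
# [('A', 'B', 0.36)]
```

The pairwise loop is fine for a handful of reports; at data-lake scale you would bucket by time window first so that only nearby reports are ever compared.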

Forensic Telemetry – In 1888, the fog was a literal barrier. Today, there is no digital fog. Even if a killer leaves no physical DNA, they leave a digital shadow: a missing phone signal where there should be one, or a transit-card tap-in that deviates from a five-year pattern.
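The transit-card example boils down to baseline-versus-deviation anomaly detection. Here is a deliberately simple Python sketch, assuming a tap history made of (station, hour-of-day) pairs and a hypothetical `min_share` cut-off: any tap whose station and hour together make up less than that share of the history is flagged for a human to review. The station data is invented for illustration.

```python
from collections import Counter

Tap = tuple[str, int]  # (station, hour of day)


def build_profile(history: list[Tap]) -> Counter:
    """Count how often each (station, hour) combination appears in the history."""
    return Counter(history)


def is_anomalous(tap: Tap, profile: Counter, min_share: float = 0.01) -> bool:
    """Flag a tap whose (station, hour) makes up less than min_share of history."""
    total = sum(profile.values())
    if total == 0:
        return True  # no baseline at all: everything deserves a look
    return profile[tap] / total < min_share


# Hypothetical five-year history, heavily simplified
history = ([("Whitechapel", 8)] * 500
           + [("Aldgate", 18)] * 480
           + [("Bank", 12)] * 20)
profile = build_profile(history)

for tap in [("Whitechapel", 8), ("Bank", 12), ("Mile End", 3)]:
    print(tap, "ANOMALY" if is_anomalous(tap, profile) else "normal")
```

A real profile would need to account for seasonality and legitimate changes in routine; the point is only that “deviation from a pattern” is something you can compute rather than guess.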

From a mythical tale to tangible value

The lure of the Whitechapel murders, the ongoing discussions and the latest DNA findings in the Jack the Ripper case are fascinating, but they are also a cautionary tale. They are a constant reminder that no matter how much data we have, if the architecture we work with is fragmented or broken, the integrity of the whole case or project is compromised. A proper resolution becomes far harder to reach, and the answer simply vanishes, just as Jack did.